Like the previous HFM examples I am going back to basics and keeping it all very simple.

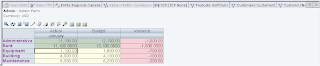

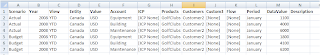

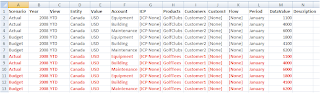

In this example I am going to extract data shown above for Account :- Equipment, Building & Maintenance. As I created a file DataStore that matches all the columns for a HFM application in the last blog I am going to re-use it.

If you want to know how I created the DataStore just have a quick read of my previous blog.

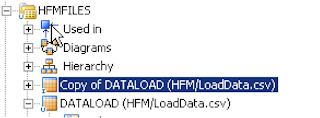

First I right clicked and duplicated the original flat file based DataStore I used for loading data.

The DataStore just required renaming (DATAEXTRACT) and giving a new resource name, in this case ExtractData.csv

If you don’t mind your extract file template being the same this is a quick and easy way to keep reusing DataStores, if you have to have a specific output template then still just duplicate a full existing DataStore and delete the columns that you don’t require.

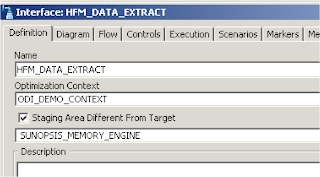

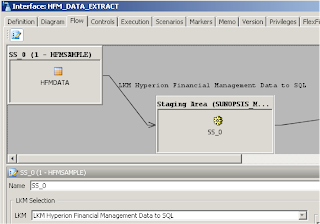

With the DataStore in place an interface can be created to extract to the data.

I am using the memory engine as the staging area because the complexity and size of the output is small, in any other circumstances I would use the power of RDBMS engine as the staging area technology.

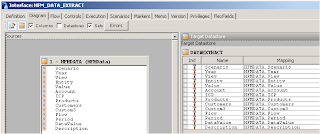

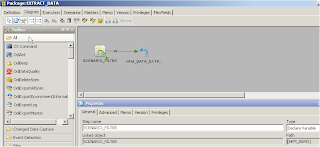

The HFMData DataStore was dragged on to the source, the newly created file DataStore was dragged on to the target. As all the source and target columns matched and there are no transformations required everything was auto mapped.

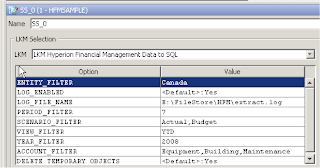

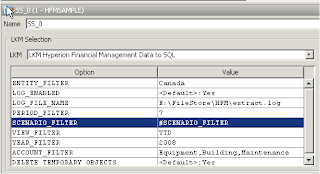

In the flow area make sure “LKM Hyperion Financial Management Data to SQL” is selected, as it may not have been select automatically, now there are a number of options available for the KM.

ENTITY_FILTER—The Entity dimension members for which you are exporting data

You can specify comma-delimited entities or one entity. To specify the parent and child separate them with a period; for example, Regional.Canada. If you do not specify any entities then data for all entities will be exported.

PERIOD_FILTER—The set of Period dimension members for which you are exporting data

Specify a range of members using the ~ character between start and end period numbers for example, 1~12. If you do not specify periods, the system exports data for only the first period defined in the HFM application

SCENARIO_FILTER—The Scenario dimension members for which you are exporting data

You can specify comma-delimited Scenario members or one scenario. If you do not specify any scenarios then data for all scenarios will be exported.

ACCOUNT_FILTER—The Account dimension members for which you are exporting data

You can specify comma-delimited accounts or one account. If you do not specify any accounts the data export will be for all accounts.

VIEW_FILTER—The View dimension member for which you are exporting data

Examples are Periodic, YTD, or

YEAR_FILTER—The Year dimension members for which you are exporting data

You can specify comma-delimited years or one year. If you do not specify any years then data for all years will be exported.

LOG_ENABLED - If set to Yes, logging is done during the extract process to the file specified in LOG_FILE_NAME.

LOG_FILE_NAME - The name of the file where logs are saved.

I just updated the options to match the POV of the data I want to extract. The period filter was set to 7 as the start period for the HFM application is July and I want to export data for January.

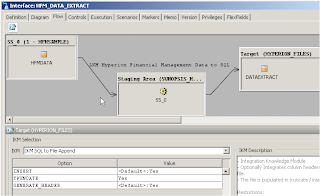

As the target is going to be a flat file I used the IKM “IKM SQL to File Append”, TRUNCATE was set to Yes so the file is generated each time the interface is executed, if I wanted to append then I would just set the option to No.

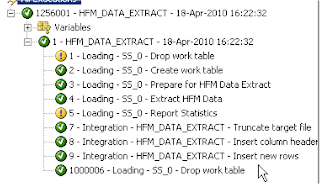

The process creates a temporary table using the memory engine, the data is exported into the temporary table based on the options in the KM, and the data is then moved from the temporary table into the flat file.

The required data from the form at the beginning of this blog has been extracted.

As there are no options in the KM to filter such areas as custom dimensions then if you have any data in these dimensions then it all will be extracted.

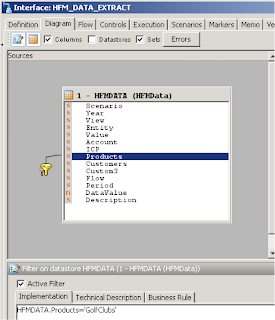

You can get around these by using filters on your staging area.

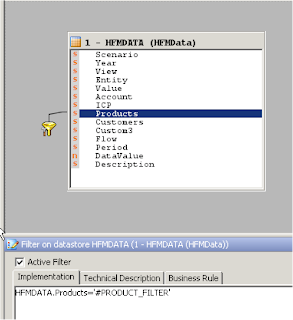

By dragging the “Products” column on to the source a filter is created, once it has been created you can create logic; in this example data will be filtered to only return products that equal ‘GolfClubs’.

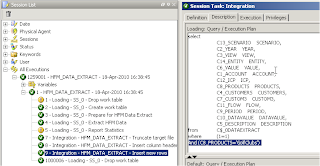

If you look in the operator at the step where data is inserted into the file from the staging area you will see the filter in the generated SQL.

You could take this one step further and use a variable instead of hard coding a value in the filter.

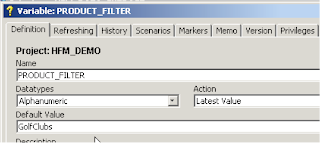

An ODI variable was created to hold the value for the product filter.

You can use the variable directly in the filter, this is achieved by using #VARIABLENAME

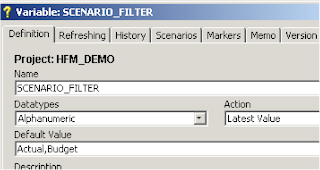

You can also use the same concept with IKM options, say that the “Scenario” data to extract was not always constant you could create a variable to set the member(s).

You can then add this variable to the IKM options

When you use variables in a KM option you cannot just run the interface as it will fail.

To get around this you need to declare the variable first before using it and this can be done in a package.

Once you create a new package you can just drag the variable on to the diagram and declare it, then drag the interface and join the steps together, using this method will not cause the interface to fail.

Well that concludes exporting data from a HFM application, yes it is basic but it should give you a head start and soon you will be on your way to creating much more complex solutions.

Great post. Is it possible to extract meta-data from HFM using a similar approach? I havent tried, though of checking with you.

ReplyDeleteHi, you can only extract member lists from HFM.

ReplyDeleteHi John, wondering if you have run into the issue of "view" being a reserved keyword. we are using the same process as you have outlined only the underlying database is MSSQL, when it tries to write to file it errors. The auto-generated ODI SQL wants to rename the column to view which errors out being a reserved word. Any ideas ?

ReplyDeleteEXCELLENT Blog - this is the holy grail. 2 questions: (1) is it possible to extract journals via the HFM to SQL KM? (2) is it possible in a filter (or elsewhere) to use expressions availabe in the hfm extract UI, such as [Base] member list? i have not had any luck here and didn't want to maintain this in a table.

ReplyDelete