So what is Lifecycle Management (LCM)? As the documentation outlines, it’s a way to migrate applications, repositories and individual objects (known as artifacts) across product environments and operating systems.

It has been around since System 9, albeit in command line form, and I must admit I knew about its existence but never really tried it out as it never looked to cover enough products. Well, now it has been given a complete overhaul, beefed up and integrated into the Shared Services console; there is even a mini Java API for it.

In V11, LCM looks to cover the main product set for migrations, so if it does what it says on the tin then it could be a very beneficial tool.

LCM supports two methods of migration:-

Application-to-Application: if the source and destination are registered with the same instance of Shared Services.

To and from the file system

I am going to concentrate on migrating a Planning application using both methods; I will stick with tradition and use the sample app.

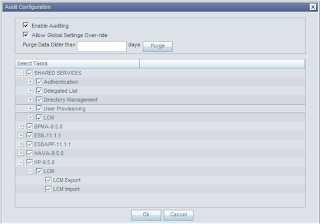

A new feature in Shared Services is the ability to run auditing, and this includes LCM auditing. It is turned off by default, so I turned on full auditing, but you can pick and choose which areas you want to audit.

You have to log into HSS as the admin and choose Administration > Configure Auditing.

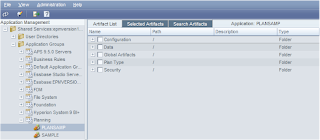

To start using LCM all you need to do is expand Application Groups, select the product area and then the application.

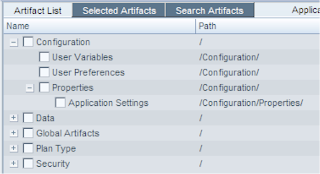

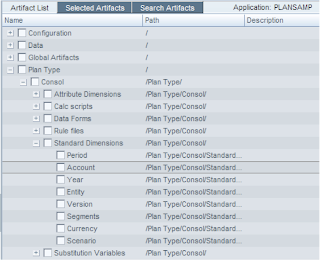

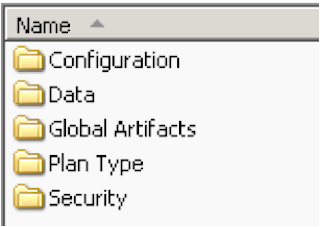

You will be confronted with the Artifact selection screen. For Planning it is broken down into specific areas, which is useful as you don’t always want to migrate everything from one environment to another.

Configuration

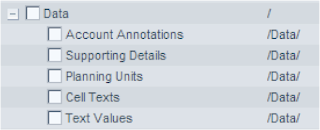

Data

This relates to data sitting in the relational side of Planning and not the Essbase side; you can’t actually migrate the Essbase data using LCM.

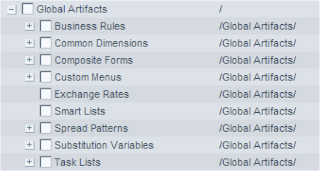

Global Artifacts

Some of the sub sections of Global Artifacts can be expanded, such as Business Rules, which breaks down into all the areas that you would have used EAS for in the past. I am not quite sure about Common Dimensions yet; I would have thought they would be more in line with EPMA, but EPMA has its own LCM area. I will update once I find out. There is even the option to migrate individual Substitution variables.

Plan Type

Plan Type breaks down into each database; as I am using the sample application it only has one. You will notice there are duplicate areas from what is in Global Artifacts; this is just down to how you have assigned things. Take Substitution variables: if you set them to be across all dbs in the application they will appear under Global Artifacts, otherwise they will appear against the database.

At last a quick and easy way to export/import planning hierarchies?

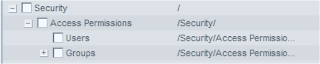

Security

This will let you migrate security on dimensions, forms, folders and task lists for groups and users, so it cuts out the need to use the exportsecurity command line utility.

So really it looks like LCM has amalgamated many of the new and existing command line utilities in the planning bin directory into an easier to use web front end.

Some points to note: if your users don’t exist in your target application you can either set them up in Shared Services or use the Foundation section of LCM. You can select multiple LCM Application Groups, so you can export users at the same time as Planning artifacts.

The migration does not create the Planning application; your target application will already need to exist.

The first migration I am going to attempt is application to application.

I created another blank Planning application named plansam2. There are a few requirements if you migrate a Planning application:

Make sure the Shared Services artifacts have been migrated (users, groups & provisioning)

Plan Types must match

Start Year, Base time period and start month must match.

Dimension names must match

If the source is a single currency app then the target should be of the same type.
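The checklist above can be expressed as a small pre-flight comparison. This is only a sketch: `PlanningAppProfile` and `find_mismatches` are hypothetical names, nothing here talks to Planning or LCM, and you would have to fill in the values yourself from each application's settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanningAppProfile:
    """Hypothetical summary of the properties the checklist compares."""
    plan_types: frozenset
    start_year: int
    base_time_period: str
    start_month: str
    dimension_names: frozenset
    multi_currency: bool


# Property names checked, in the order they appear in the checklist.
CHECKED_FIELDS = (
    "plan_types",
    "start_year",
    "base_time_period",
    "start_month",
    "dimension_names",
    "multi_currency",
)


def find_mismatches(source, target):
    """Return the names of the checklist properties that differ."""
    return [
        name for name in CHECKED_FIELDS
        if getattr(source, name) != getattr(target, name)
    ]


# Illustrative values only; they are not taken from the sample application.
source_app = PlanningAppProfile(
    frozenset({"Plan1"}), 2008, "12 Months", "Jan",
    frozenset({"Account", "Entity"}), False,
)
target_app = PlanningAppProfile(
    frozenset({"Plan1"}), 2009, "12 Months", "Jan",
    frozenset({"Account", "Entity"}), False,
)
print(find_mismatches(source_app, target_app))
```

An empty list means the two profiles agree on every property in the checklist; it says nothing about whether the Shared Services users and groups have been migrated.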

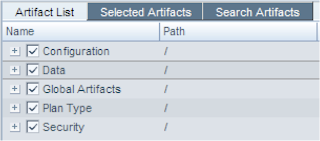

Selected all Artifacts

Define Migration

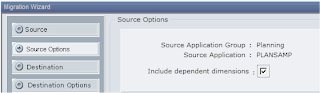

Make sure you tick include dependent dimensions; the first time I ran a migration I never ticked this and a number of forms did not get imported because it pointed out that members did not exist. I assume if your migration includes dimensions then you need to tick this, otherwise the dimensions won’t get imported first.

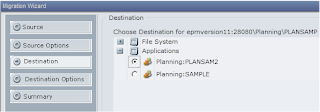

PLANSAM2 was selected as the destination application.

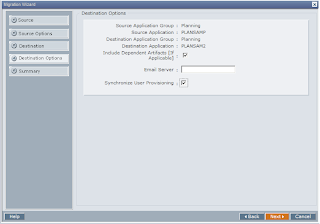

Include Dependent Artifacts is selected by default

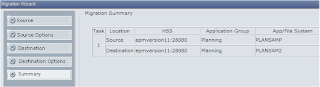

Summary of the migration.

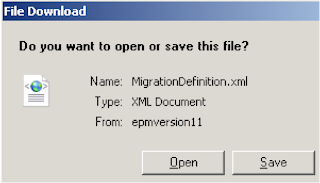

At this point you have the option to either execute the migration or save the definition. I chose the save option first.

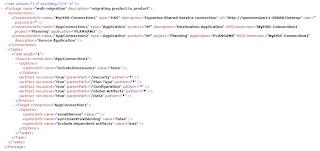

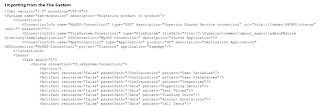

An XML file is generated

As an XML file is produced, it can easily be edited if changes are required.

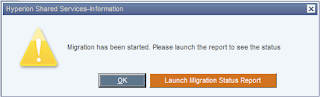

On clicking the status report option a screen displays the active state of the migration.

Besides the logs in the console you can view the log at:

\Hyperion\logs\SharedServices9\SharedServices_LCM.log

If you are interested where the migration report information is stored then have a look at the table LCM_Migration in the HSS repository.

You will also find the action logs and definitions for the migration in \Hyperion\common\msr

plus there are some logs in the \migration directory below which are user related.

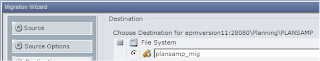

So that’s app to app, now what about app to file system?

Well, you follow the same process, but at the destination section of defining the migration you choose the file system option, entering a folder you wish the exported files to go to.

As before, you can save the definition file once you have completed the wizard.

The files will be exported to

\Hyperion\common\import_export\

So in my case

\Hyperion\common\import_export\hypadmin@Native Directory\plansamp_mig
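If you want to locate the export folder from a script, the path can be assembled as below. This is a sketch that assumes the sub folder is always named `<user>@<directory>`, which I am inferring from the single example above; `export_folder` is a hypothetical helper, not part of LCM.

```python
from pathlib import PureWindowsPath

# Root export location as quoted in the post.
EXPORT_ROOT = PureWindowsPath(r"\Hyperion\common\import_export")


def export_folder(user, directory, folder):
    """Build the export path, assuming a '<user>@<directory>' sub folder."""
    return EXPORT_ROOT / f"{user}@{directory}" / folder


print(export_folder("hypadmin", "Native Directory", "plansamp_mig"))
# prints \Hyperion\common\import_export\hypadmin@Native Directory\plansamp_mig
```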

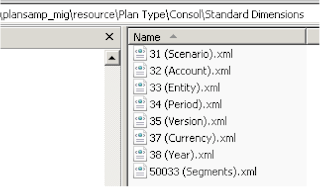

Under that folder is a folder named resource with further directories relating to the migration artifacts tree in HSS.

Most of the exported artifacts are in XML format; there is a useful table in the LCM documentation highlighting the type of file that is created for each artifact.

The xml files are tagged with an object id that relates to the id in the planning repository.

Example of xml dimension export.

To import all the artifacts back into the target application you need the target HSS to be able to access the exported artifacts.

Now this is where I am not sure if this is the quickest solution, but I have not come across another way of doing it yet. I had to create an XML import definition; it is pretty much the same as the export definition but you have to swap around the source and target sections. You can also enter the path to the artifacts in the file.

An example of how the file should look is at

http://download.oracle.com/docs/cd/E12825_01/epm.111/epm_lifecycle_management/apes09.htm

The sample files are also available in \Hyperion\common\utilities\LCM\9.5.0.0\Sample
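Swapping the source and target by hand is easy enough, but it could also be scripted. The sketch below is illustrative only: the `Task`, `Source` and `Target` elements and the `connection` attribute are assumptions about the definition layout, so check them against the sample files before relying on it, and `swap_source_and_target` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET

# Simplified stand-in for an export definition; the element and attribute
# names are assumptions and should be checked against the LCM samples.
EXPORT_DEFINITION = """\
<Package>
  <Tasks>
    <Task seqID="1">
      <Source connection="AppConnection"/>
      <Target connection="FileSystemConnection"/>
    </Task>
  </Tasks>
</Package>
"""


def swap_source_and_target(definition_xml):
    """Return the definition with each task's source and target connections swapped."""
    root = ET.fromstring(definition_xml)
    for task in root.iter("Task"):
        source = task.find("Source")
        target = task.find("Target")
        if source is None or target is None:
            continue  # nothing to swap in this task
        source_conn = source.get("connection")
        target_conn = target.get("connection")
        if source_conn is None or target_conn is None:
            continue  # leave incomplete tasks untouched
        source.set("connection", target_conn)
        target.set("connection", source_conn)
    return ET.tostring(root, encoding="unicode")


print(swap_source_and_target(EXPORT_DEFINITION))
```

Only the connection references are swapped here; anything else that differs between an export and an import definition, such as the path to the artifacts, would still need editing by hand.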

To import just log into HSS on the target system go to Administration > Edit/Execute Migration, select the import definition you created and follow the wizard.

You need to be aware of the order in which artifacts should be imported if you are not running a full migration; this can be found in the Best Practices section

http://download.oracle.com/docs/cd/E12825_01/epm.111/epm_lifecycle_management/apes06.htm

I do not have a separate target environment, so I had to test the import on the same environment; it is something I am going to test in the next week or so.

Once you have selected the import definition you will be greeted again with the wizard and the process is pretty much the same as the export. The import ran successfully.

There is no reason the import shouldn’t run successfully on a separate target machine, though there is one area I will be interested in when I test it, and that is business rules. The exported XML file looks the same as in previous versions and it hard codes the server name and the native SID string, which change across servers; I know it has been a pain to import them in the past. I wonder if it will handle it any differently and correct the server and the native string. I will update the blog once I have found the answer.

Business rule xml example

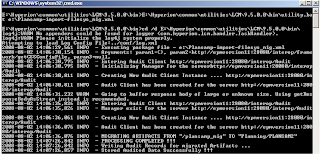

So what about running the migration from the command line? Well, it couldn’t be simpler: just run utility.bat and point it to the definition file.

No problems there; you can view the status and logs in HSS just as if you had run it from the HSS console.
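If you want to schedule the command line run, a thin wrapper is enough. This is a minimal sketch that assumes utility.bat takes the definition file as its only argument, as described above; the function names are hypothetical and you need to supply the real paths for your own install.

```python
import subprocess


def build_lcm_command(utility_path, definition_path):
    """Build the command line: the utility followed by the definition file."""
    return [str(utility_path), str(definition_path)]


def run_migration(utility_path, definition_path):
    """Run the utility and return its exit code without raising on failure."""
    completed = subprocess.run(
        build_lcm_command(utility_path, definition_path),
        check=False,
    )
    return completed.returncode


# Placeholder paths for illustration; replace them with your own locations.
print(build_lcm_command(r"C:\path\to\utility.bat", r"C:\path\to\definition.xml"))
```

I have not checked what exit codes the utility returns, so treat the return value as a hint and confirm the outcome in the HSS status report.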

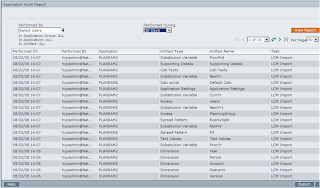

Quickly back to the auditing that I enabled at the beginning: the auditing is broken into three areas, Security, Artifact and Config. Going to Administration > Audit Reports > Artifact Reports will produce a report which can be filtered by user and by when the action was performed; if you need to do further analysis you can export the report to CSV.

If you ever need to query this data further then it is stored in a table named SMA_AUDIT_FACT in the HSS repository.
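Once the report has been exported to CSV, further analysis can be as simple as the sketch below. The column headings (`User`, `Artifact`, `Date`) and the rows are made up for illustration, as I have not reproduced the real export layout here, and `rows_for_user` is a hypothetical helper.

```python
import csv
import io

# Made-up sample standing in for an exported audit report.
SAMPLE_REPORT = """\
User,Artifact,Date
hypadmin,Forms,2008-08-01
planner1,Dimensions,2008-08-02
hypadmin,Security,2008-08-03
"""


def rows_for_user(csv_text, user, user_column="User"):
    """Return the report rows whose user column matches the given user."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [row for row in reader if row.get(user_column) == user]


for row in rows_for_user(SAMPLE_REPORT, "hypadmin"):
    print(row["Artifact"], row["Date"])
```

Pass a different `user_column` if the real export uses another heading for the user.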

From what I have tested today I am really impressed. I understand it has not been an extensive test, but it is like a breath of fresh air after the pain of migrations in the past and is going to be beneficial for so many users. It’s about time!!!

The above post really helped me a lot. Thanks very much John.

We had an application which we needed to migrate from Planning 11.1.1.3 to 11.1.1.2.

So we had to perform an application to system migration, which we were able to perform successfully due to this post.

Thanks very much once again

Thanks John for this very useful post.

What are your experiences with the LCM utility? We are just playing around with this tool, but it takes 30 seconds until it starts the export/import, which is then performed in another 10 seconds. Do you know of any option to speed this up?