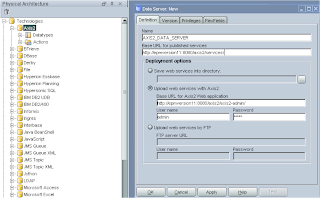

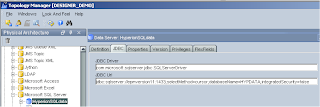

The first step of the configuration is in the Topology Manager. An Axis2 technology is already installed by default; a Data Server needs to be inserted under it to set up the connection information for the axis2 web application.

The name, base URLs and account details are required; the default account for axis2 is admin/axis2.

The upload URL is the location where ODI will upload an aar (axis2 archive file) with compiled information about the data store.

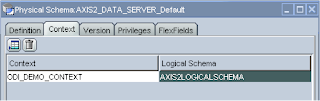

Once this is applied, set up a logical schema and map it to a context; that is all that is required in the Topology Manager.

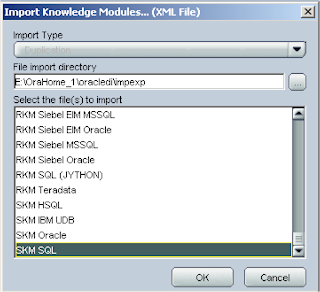

In the Designer you will need to import an SKM (Service Knowledge Module); which SKM you choose depends on the underlying technology of the Data Store you are going to generate a Data Service for.

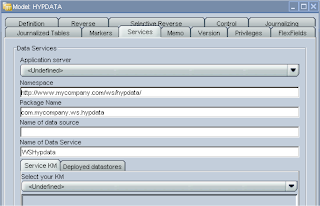

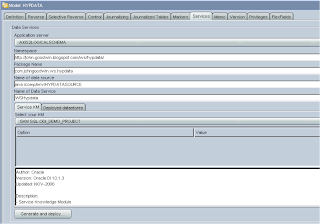

Now select the model that you want to apply a data service to, edit it and select the Services tab.

In the application server dropdown there should be the name of the logical schema you created.

The Namespace and Package Name you enter are your choice.

The name of the data source is important, as it will be used later on in the configuration of the web server. The data source name is used to map JDBC connection details to your data store; when using data services, the JDBC information for the data store is not picked up from ODI and has to be manually entered into the web application's configuration files.

The name has to take the form java:/comp/env/<name of data source>

The name of the Data Service is once again entirely your choice; you should end up with something like the following.

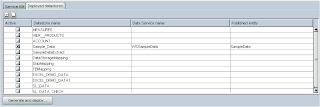

If you select the “Deployed datastores” tab you can pick which Data Stores you want to become part of the Data Service. An important prerequisite is that each Data Store has a primary key defined, otherwise the generation of the Data Service will fail.

If you have not set up the ODI_JAVA_HOME environment variable to point to a JDK instead of a JRE you will get the following error.

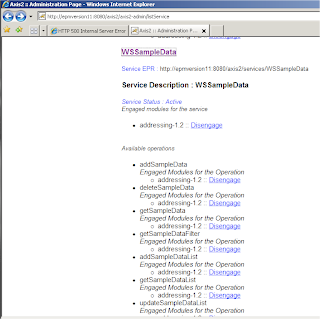

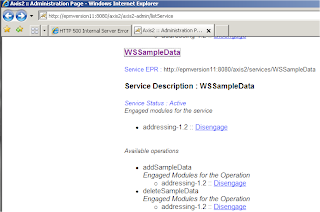

All being well the Data Service will be generated and uploaded to the axis2 web application.

You can check it has been deployed correctly by listing the available services in the axis2 web application.

Each deployed Data Service exposes a number of operations, such as adding, deleting and viewing Data Store records through a Web Service.

Now this is where many experience difficulties with Data Services. Most, rightly so, assume that the configuration is complete, but as I mentioned the Data Services do not pick up the data source connection details from ODI; these details need to be added to the configuration files in the web application server.

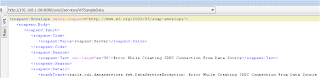

If you try to use a Web Service without configuring the data source, you will get an error like the following.

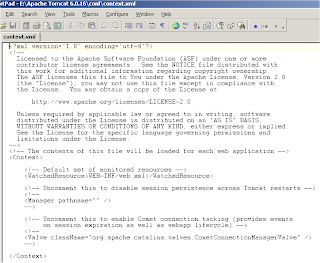

The location of the files will depend on the application server you are using. In this example I am using Tomcat, and the first file to update is context.xml, which is located in the conf directory.

In the file, additional information needs to be added between the <Context> and </Context> tags.

The format of the additional information is:

<Resource name=" " type="javax.sql.DataSource" driverClassName=" " url=" " username=" " password="" maxIdle="2" maxWait="-1" maxActive="4"/>

The resource name relates to the Data Source name that was defined in the Services tab of the model.

The driverClassName is the JDBC driver class name; this will depend on what technology you are using.

The url is the JDBC connection URL for the database.

If you are unsure of the details, you just need to go back into Topology Manager and check the Data Server.

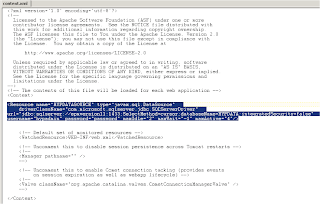

An example of a completed context.xml is:

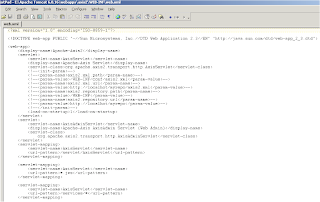

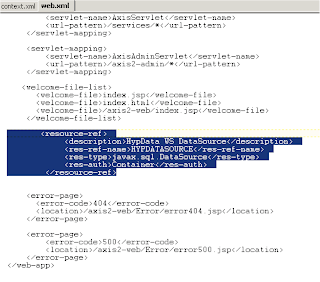
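As a text-form sketch of such an entry, assume the data source name entered in the Services tab was java:/comp/env/jdbc/SampleDS and that the data store sits on an Oracle database. The resource name, host, SID and credentials below are hypothetical placeholders rather than values from my setup; only the attribute names come from the format described above.

```xml
<Context>
    <!-- Hypothetical values: name is the part after java:/comp/env/ in the
         ODI data source name; url, username and password are placeholders -->
    <Resource name="jdbc/SampleDS"
              type="javax.sql.DataSource"
              driverClassName="oracle.jdbc.OracleDriver"
              url="jdbc:oracle:thin:@localhost:1521:XE"
              username="sample_user"
              password="sample_password"
              maxIdle="2"
              maxWait="-1"
              maxActive="4"/>
</Context>
```

For another technology, the driverClassName and url would be swapped for the values shown on the Data Server in Topology Manager.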

The next file that requires updating is web.xml, which is located in the axis2 web application directory.

The information that requires adding can be placed anywhere between the <web-app> and </web-app> tags.

It is basically information that tells the axis2 web application the name of the data source to map to the entry in context.xml, and it is in the format:

<resource-ref>
  <description> </description>
  <res-ref-name> </res-ref-name>
  <res-type> </res-type>
  <res-auth> </res-auth>
</resource-ref>
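A filled-in version might look like the sketch below, assuming a data source entered in ODI as java:/comp/env/jdbc/SampleDS (a hypothetical name). The res-ref-name has to match the Resource name used in context.xml; javax.sql.DataSource and Container are the usual values for a container-managed JDBC data source.

```xml
<resource-ref>
    <!-- description is free text; jdbc/SampleDS is a hypothetical name -->
    <description>Data source for the ODI data service</description>
    <res-ref-name>jdbc/SampleDS</res-ref-name>
    <res-type>javax.sql.DataSource</res-type>
    <res-auth>Container</res-auth>
</resource-ref>
```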

So in my example, once completed, it looked like this.

Once the configuration files have been completed then the web application server will require a restart.

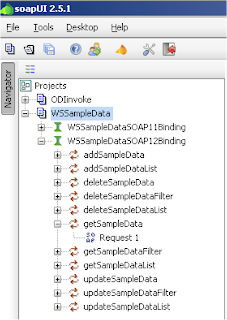

To test the Data Services I am going to use soapUI again. If you have not downloaded it I recommend doing so, as it is perfect for testing any sort of web service, full of functionality and free.

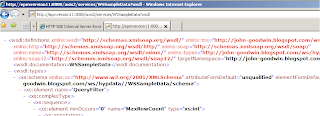

To test the service you will need to point soapUI to the WSDL; you can get the URL from the axis2 service list.

Selecting the service will take you to the WSDL address.

Entering the WSDL URL into soapUI will retrieve the available operations.

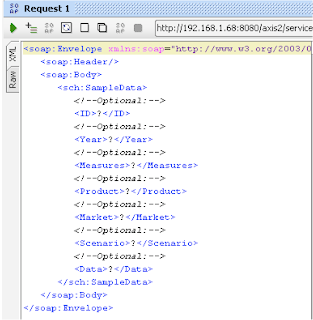

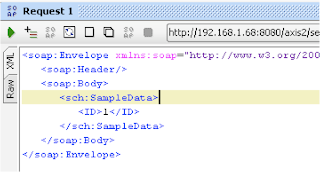

So let's take one of the operations, getSampleData, as an example.

The SOAP request takes any of the columns from the Data Store; the primary key column is mandatory even though it is marked as optional.

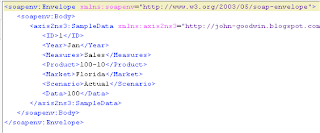

Running the SOAP request will retrieve data from the Data Store against the defined ID number.
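To give a rough idea of the shape of such a request, here is a minimal sketch. Only the operation name getSampleData comes from this example; the odi prefix, the target namespace and the ID column name are hypothetical placeholders, and the real element names and nesting are whatever soapUI generates from the WSDL.

```xml
<!-- Hypothetical request: the namespace URI, prefix and column name are
     placeholders; use the request soapUI generates from the WSDL -->
<soapenv:Envelope xmlns:soapenv="http://schemas.xmlsoap.org/soap/envelope/"
                  xmlns:odi="http://example.com/odi/sampledata">
  <soapenv:Header/>
  <soapenv:Body>
    <odi:getSampleData>
      <!-- primary key column: supply it even though it is marked optional -->
      <odi:ID>1</odi:ID>
    </odi:getSampleData>
  </soapenv:Body>
</soapenv:Envelope>
```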

So with the different operations you can have all the functionality of deleting, adding and querying records.

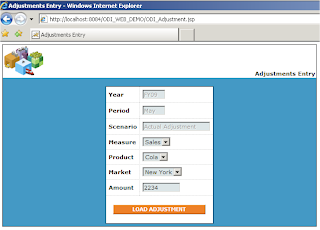

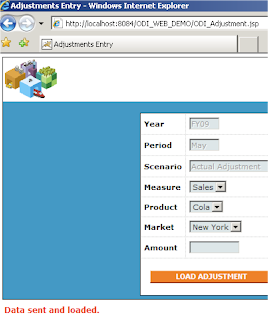

A simple example of how this could be used: say you have users that enter manual adjustments into an Essbase cube. The current process is that the user sends in the data, which then has to be loaded into the cube and rolled up; this process needs to be automated and controlled.

Well, as long as they have HTTP access, a web page can be created.

Once the user has entered the information and submitted it, the data could be sent directly into a database table using the data service I have just gone through (using the addSampleData operation). To get an idea of how to write Java code to do this, have a look at this blog.

So how about automating the process of loading the data into Essbase? Well, if you read back to a previous blog, I go through exactly how to do it by using CDC on a Datastore. With CDC, any changes to a Datastore can be monitored, and when a threshold is met an interface can be run that loads data from the table into Essbase. Finally, you could use the KM option to run a calc script after the data load to roll up part of the cube.

Now you will have an extremely simple automated adjustment load process.
