I was looking at the new features for the planning Outline load utility and the following caught my attention -”Administrators can now use the Outline Load utility to export metadata to a relational data source.”

Going back to 11.1.2.2.300 there were quite a few enhancements to the outline utility:

/RED:weblogic.jdbc.sqlserver.SQLServerDriverGoing back to 11.1.2.2.300 there were quite a few enhancements to the outline utility:

- Import metadata and data from a relational data source.

- Optimize command lines by storing command line arguments in a command properties file. For example, if you use a command properties file to run the same commands on multiple applications, you need only change a parameter in the command line for each import. This allows you to bundle switches for a common application. It also makes command lines shorter and easier to manage, and enhances readability and ease of use.

- Export data export to a flat CSV file

I blogged about the 11.1.2.2.300+ features in three parts which can be found here, here and here.

I double checked and the ability to export metadata to a relational data source was not available in 11.1.2.2.300 so I thought it would be worth going through this new piece of functionality in 11.1.2.3, now in my previous blogs I covered some areas such as using a command properties file which I am not going to cover again so if you have not done already it is probably worth having a read through the three previous blogs.

The outline load utility has certainly become a powerful tool over the years since its first appearance in the early days of version 11, the only feature missing with relational data sources is the ability to export data (only to a file at present) though I am sure that functionality won’t be too far away.

If you look at the new features in 11.1.2.3 with classic planning applications and add in the functionality available with the outline load utility or ODI then I would certainly question the need to go down the EPMA route, if you are already in EPMA mode then keep enjoying the pain or maybe it is time to turn back from the dark side :)

Anyway before jumping in and exporting metadata then there are a number of new parameters available which need to be understood, many are similar to the ones used when importing except the parameter contains an E (export) instead of I (import).

/ER: RelationalConnectionPropertiesFileName

This parameter defines the properties file which will hold all the database connection information for the export, it is the export equivalent to the /IR parameter which I previously covered and is used for importing.

/ERA

If you don’t specify the /ER parameter then you can use the /ERA parameter which basically uses the same database connection information as the planning application that the export is running against, personally I would want to keep import/export type data separate to the planning application database.

/REC:catalog

This parameter I don’t believe is important if the database target is Oracle but for SQL Server it should be database name.

/RED:driver

The parameter is the JDBC driver that will be used for the connection to the relational database.

For Oracle use: /RED:oracle.jdbc.OracleDriver

For SQL Server:

I double checked and the ability to export metadata to a relational data source was not available in 11.1.2.2.300 so I thought it would be worth going through this new piece of functionality in 11.1.2.3, now in my previous blogs I covered some areas such as using a command properties file which I am not going to cover again so if you have not done already it is probably worth having a read through the three previous blogs.

The outline load utility has certainly become a powerful tool over the years since its first appearance in the early days of version 11, the only feature missing with relational data sources is the ability to export data (only to a file at present) though I am sure that functionality won’t be too far away.

If you look at the new features in 11.1.2.3 with classic planning applications and add in the functionality available with the outline load utility or ODI then I would certainly question the need to go down the EPMA route, if you are already in EPMA mode then keep enjoying the pain or maybe it is time to turn back from the dark side :)

Anyway before jumping in and exporting metadata then there are a number of new parameters available which need to be understood, many are similar to the ones used when importing except the parameter contains an E (export) instead of I (import).

/ER: RelationalConnectionPropertiesFileName

This parameter defines the properties file which will hold all the database connection information for the export, it is the export equivalent to the /IR parameter which I previously covered and is used for importing.

/ERA

If you don’t specify the /ER parameter then you can use the /ERA parameter which basically uses the same database connection information as the planning application that the export is running against, personally I would want to keep import/export type data separate to the planning application database.

/REC:catalog

This parameter I don’t believe is important if the database target is Oracle but for SQL Server it should be database name.

/RED:driver

The parameter is the JDBC driver that will be used for the connection to the relational database.

For Oracle use: /RED:oracle.jdbc.OracleDriver

For SQL Server:

/RER:url

The parameter is the JDBC URL to be used for the connection to the relational database.

For Oracle the format is: jdbc:oracle:thin:@[DB_SERVER_NAME]:DB_PORT:DB_SID

So for example that could be: /RER:jdbc:oracle:thin:@[fusion11]:1521:FUSION

For SQL Server the format is:

jdbc:weblogic:sqlserver://[DB_SERVER_NAME]:DB_PORT

An example being

/RER: jdbc:weblogic:sqlserver://[fusion11]:1433

/REU:userName

This is the user name to connect to the relational database.

/REP:password

The password for the database connection, this is unencrypted the first time it is used in a properties file and once an export has completed the file will have been updated with an encrypted version.

/REQ:exportQueryOrKey

This can either be the SQL export query to be run or it can be used to designate a key which will hold the SQL query, I will cover this in more detail shortly.

So those are the main new parameters to use when exporting metadata and if you combine them with the existing parameters you should be ready to start putting it all together.

I am now going to go through a few examples of extracting account metadata from the sample planning application.

First I start out with a batch script which will call the outline load utility and set the properties and password file to use.

OutlineLoad –f:E:\PLAN_SCRIPTS\password.txt /CP:E:\PLAN_SCRIPTS\metaextract.properties

The –f parameter defines the password file to use and the /CP parameter defines the property file to use which will hold all the remaining parameters.

The properties file metaextract.properties contains the following information:

The /ER parameter defines the database connection properties file and instead of using two property files I have pointed them all to the same file.

I am extracting to an Oracle database and the PLANSTAGE schema.

As explained earlier the password used in the /REP parameter is not encrypted at first.

Once the utility has been run the file should updated and the password encrypted.

Now on for the most important parameter /REQ which defines the SQL query or key.

The export query follows a strict format so don’t be thinking you can write your own elaborate SQL.

INSERT INTO <tableName> (column1, column2, ...) VALUES (property1, property2,...)

<tableName> is the name of the table into which the exported metadata will be inserted into.

(column1, column2, …) is an optional list of column names the metadata will be inserted into.

(property1, property2, …) are member property names to be extracted.

So let’s take a simple example and extract the member and parent information.

In this example I have used a key for the /REQ parameter but the following is perfectly valid as well:

Instead of specifying the dimension name like you would normally when using the utility you use “Member”.

The query will extract the Member and Parent values and insert them into a table called PLAN_ACC, the number of columns in the table will need to match the number of properties being extracted.

The name of columns in this case does not matter just as long as there are the same number of columns, the order of how they populated is defined by the query so in my example “Member “ then “Parent”.

Before running make sure the table and columns have been created as the utility will not do this and fail.

If you specify an incorrect table name or the table (this is based on Oracle):

Incorrect number of columns in the table:

If the table has been created correctly then the export should be successful and the table populated.

It is worth pointing out that the table will not be cleared out before the export runs and unfortunately it doesn’t look like there is an option yet to do so, hopefully it will be added in the future as all it will require is another parameter with a true/false option but until then you will need to clear out the table with your own preferred method.

If the table has additional columns then you can define which columns to insert to in the query.

If you want to extract all the available properties then you can use the <properties> option in the query instead of specifying each property

Once again make sure the table has the same number of columns as properties that will be exported, if you are not sure how many columns are required then just run the script and check the output log as it will display all the properties that are being exported.

When the correct number of columns has been defined in the table the export should be successful.

Even the formulas are exported quite cleanly which I know could a bit of a pain when exporting to csv

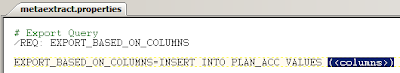

There is another nice piece of functionality which allows you to export member properties based on the columns in the target table.

So say I had the following columns in a table: “Member”,”Parent”,”Alias: Default”

The column names must match the properties in planning.

In the query the <columns> option can be used.

When the export runs the utility will check the columns in the target table and then match that to the properties to export.

So there we have it yet another great additional to the Outline Load utility.

I keep finding myself referencing this post for the details on /REQ. Thanks for the detailed post!

ReplyDeleteThanks for the post. I used OTLLOAD utility for building dimensions from File but not for export to relational table. This article is certainly helpful but couldn't open some of the screenshots in firefox/Chrome/Iexplorer.

ReplyDeletePlease help.

Apparently this only works for Metadata and not Actual cube data... we've tried to port it using REQ to match the EDD and no luck....Any updates?

ReplyDelete